If you need to capture screenshots of websites programmatically, you have two main options: spin up your own headless browser with Puppeteer, or call a screenshot API and get the image back in seconds. Both work - but the right choice depends entirely on your use case, team size, and how much infrastructure you want to manage.

This guide breaks down both approaches honestly, with real code examples, so you can make the right call for your project.

What is Puppeteer?

Puppeteer is a Node.js library maintained by Google that provides a high-level API to control Chromium or Chrome over the DevTools Protocol. It can navigate pages, click elements, fill forms, intercept network requests, and - most relevantly - take screenshots.

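    const puppeteer = require('puppeteer');

    // CommonJS has no top-level await, so wrap the calls in an async function
    (async () => {
      const browser = await puppeteer.launch();
      try {
        const page = await browser.newPage();
        await page.setViewport({ width: 1280, height: 800 });
        await page.goto('https://example.com', { waitUntil: 'networkidle2' });
        const screenshot = await page.screenshot({ type: 'png' }); // PNG bytes in memory
      } finally {
        await browser.close(); // always release the Chromium process
      }
    })();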

Puppeteer gives you full browser control. You can intercept requests, inject JavaScript, emulate devices, handle authentication flows - the full spectrum. It is the go-to tool when you need to automate a browser, not just photograph a URL.

What is a Screenshot API?

A screenshot API is a managed HTTP service that runs the headless browser infrastructure for you. You send a POST request with a URL (and optional parameters), and the API returns a PNG, JPEG, PDF, or video. No browser installed locally, no Chromium binaries, no memory management.

    curl -X POST "https://screenshotcore.com/api/v1/screenshot" \
      -H "Authorization: Bearer YOUR_API_KEY" \
      -H "Content-Type: application/json" \
      -d '{
        "url": "https://example.com",
        "format": "png",
        "viewport_width": 1280,
        "viewport_height": 800,
        "wait_until": "networkidle0"
      }' \
      --output screenshot.png

The API handles retries, browser pool management, caching, scaling, and Chromium version updates. You get a screenshot. Done.

The Core Trade-off

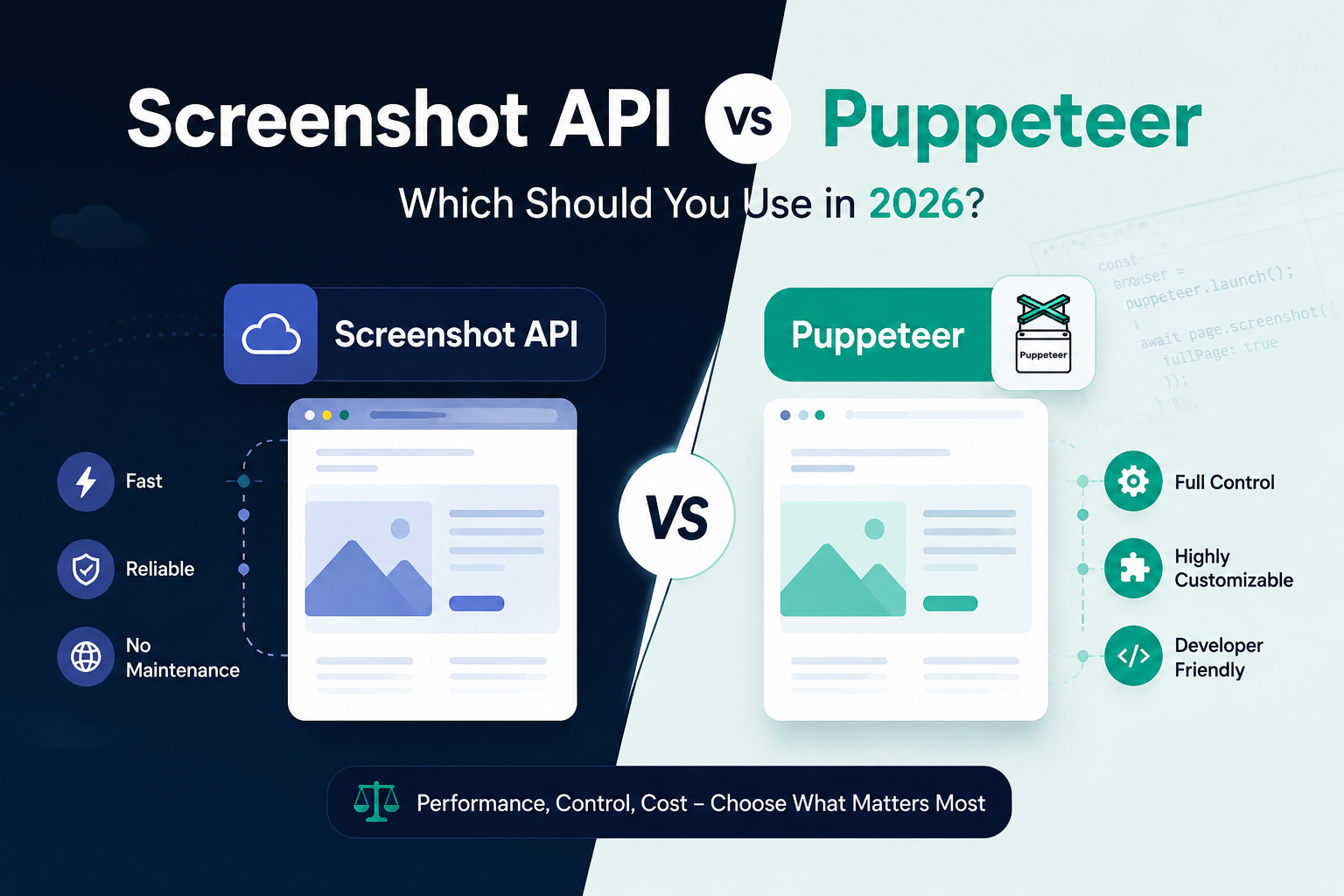

The fundamental difference is control vs. simplicity:

- Puppeteer gives you a full programmable browser. You control every step of the page lifecycle.

- A screenshot API gives you a clean HTTP interface. You control parameters, not the browser itself.

Neither is universally better. The question is what your project actually needs.

When Puppeteer is the Right Choice

1. You need complex multi-step browser automation

If you need to log into a site, navigate through several pages, interact with a dynamic UI, and then screenshot the result - Puppeteer is the right tool. A screenshot API's request/response model doesn't fit multi-step workflows.
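
As a rough sketch of such a multi-step flow, the function below logs in and then captures a page that requires a session. The selectors (#email, #password, the submit button), the URLs, and the function name are hypothetical placeholders; the page methods it calls (goto, type, click, waitForNavigation, screenshot) are part of Puppeteer's Page API.

```javascript
// Sketch: log in, then screenshot a page that requires a session.
// `page` is a Puppeteer Page; selectors and URLs are placeholders.
async function screenshotAfterLogin(page, { loginUrl, targetUrl, email, password }) {
  await page.goto(loginUrl, { waitUntil: 'networkidle2' });

  // Fill the (hypothetical) login form
  await page.type('#email', email);
  await page.type('#password', password);

  // Start waiting for the navigation before clicking, so the
  // navigation triggered by the click is not missed
  await Promise.all([
    page.waitForNavigation({ waitUntil: 'networkidle2' }),
    page.click('button[type="submit"]'),
  ]);

  // The same page now carries the session, so the target can be loaded
  await page.goto(targetUrl, { waitUntil: 'networkidle2' });
  return page.screenshot({ type: 'png', fullPage: true });
}
```

Because each step depends on the state left behind by the previous one, this kind of flow is awkward to express as a single stateless HTTP request.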

2. You're already running Node.js infrastructure

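If you have a Node.js backend and the screenshot is one small part of a larger automation pipeline, adding Puppeteer is a single dependency (though by default its install downloads a browser build of its own). Bringing in an external API, with its own credentials, billing, and failure modes, may not be worth it.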

3. You need network interception

Puppeteer can intercept and mock network requests, which is invaluable for testing - capturing screenshots with specific API responses stubbed out, for example.
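
A minimal sketch of that stubbing pattern, assuming a stubs object that maps a URL fragment to the JSON body to serve (that shape, the substring matching rule, and the function name are illustrative choices, not part of Puppeteer). The calls used (setRequestInterception, the request event, respond, continue) are Puppeteer's own.

```javascript
// Sketch: screenshot a page with selected API responses stubbed out.
// `page` is a Puppeteer Page; `stubs` maps a URL fragment to the JSON
// body to serve for matching requests (an assumed, illustrative shape).
async function captureWithStubs(page, url, stubs) {
  await page.setRequestInterception(true);

  page.on('request', (request) => {
    const match = Object.keys(stubs).find((fragment) => request.url().includes(fragment));
    if (match) {
      // Answer from the stub instead of hitting the network
      request.respond({
        status: 200,
        contentType: 'application/json',
        body: JSON.stringify(stubs[match]),
      });
    } else {
      // With interception enabled, every request must be continued,
      // responded to, or aborted, otherwise it stalls
      request.continue();
    }
  });

  await page.goto(url, { waitUntil: 'networkidle0' });
  return page.screenshot({ type: 'png' });
}
```

A call such as captureWithStubs(page, 'https://example.com', { '/api/user': { name: 'Test User' } }) would render the page as if the user endpoint had returned that body.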

4. You have very low volume and tight cost requirements

At a few dozen screenshots per day, Puppeteer on a small server is essentially free. The operational overhead is low at that scale.

When a Screenshot API is the Right Choice

1. You need to scale beyond a single server

Chromium is memory-hungry. A single instance typically uses somewhere around 200–400 MB of RAM, and heavy pages can push that higher. If you need to handle hundreds or thousands of screenshots per hour, managing a Puppeteer cluster - with queuing, worker processes, crash recovery, and autoscaling - becomes a significant engineering project. A screenshot API handles that scaling for you.
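
To make the memory constraint concrete, here is a back-of-the-envelope estimate. The server size, the reserved headroom, and the 5-second capture time are assumptions chosen for illustration; the 400 MB per instance is the upper end of the range quoted above. Treat the result as a theoretical ceiling, not a benchmark.

```javascript
// Back-of-the-envelope capacity estimate for one server.
// All inputs are assumptions; replace them with measured values.
function estimateCapacity({ totalRamMb, reservedRamMb, ramPerBrowserMb, secondsPerCapture }) {
  const usableMb = totalRamMb - reservedRamMb;
  const concurrentBrowsers = Math.max(0, Math.floor(usableMb / ramPerBrowserMb));
  const capturesPerHour = Math.floor((concurrentBrowsers * 3600) / secondsPerCapture);
  return { concurrentBrowsers, capturesPerHour };
}

// A 4 GB server with 1 GB reserved for the OS and the Node.js process,
// 400 MB per Chromium instance, and 5 seconds per capture:
console.log(estimateCapacity({
  totalRamMb: 4096,
  reservedRamMb: 1024,
  ramPerBrowserMb: 400,
  secondsPerCapture: 5,
}));
// → { concurrentBrowsers: 7, capturesPerHour: 5040 }
```

The ceiling assumes every slot stays busy and no page exceeds its memory budget, which is exactly what queuing and crash recovery exist to approximate in practice.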

2. You're building in any language other than JavaScript

Puppeteer itself is a Node.js library. If your backend is Python, PHP, Go, Ruby, or anything else, you'd need a separate Node.js service just to run it (or a different automation tool with bindings for your language). A screenshot API is a simple HTTP call from any language.

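    import requests

    response = requests.post(
        "https://screenshotcore.com/api/v1/screenshot",
        headers={"Authorization": "Bearer YOUR_API_KEY"},
        json={"url": "https://example.com", "format": "png"},
        timeout=60,  # requests has no default timeout
    )
    response.raise_for_status()  # don't write an error body into the .png

    # The response body is the raw image bytes
    with open("screenshot.png", "wb") as f:
        f.write(response.content)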

3. You need reliable production uptime

Puppeteer in production will crash sooner or later. The Chromium process runs out of memory, hangs on certain pages, or hits OS-level limits. Building reliable retry logic, zombie process cleanup, and health monitoring is non-trivial work. With a screenshot API, that work is the provider's responsibility, typically backed by an SLA.
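
One piece of that reliability work, sketched under assumptions: a wrapper that gives each capture attempt a time limit and retries with exponential backoff. The function name, option names, and default values are hypothetical, and captureFn stands for any async function that performs a capture.

```javascript
// Sketch: run a capture with a per-attempt timeout and retries with
// exponential backoff. `captureFn` is any async function.
async function withRetry(captureFn, { retries = 3, timeoutMs = 30000, baseDelayMs = 500 } = {}) {
  let lastError;
  for (let attempt = 0; attempt <= retries; attempt++) {
    let timer;
    try {
      const timeout = new Promise((_, reject) => {
        timer = setTimeout(() => reject(new Error(`Timed out after ${timeoutMs} ms`)), timeoutMs);
      });
      // Whichever settles first wins: the capture or the timeout
      return await Promise.race([captureFn(attempt), timeout]);
    } catch (err) {
      lastError = err;
      if (attempt < retries) {
        // Back off: baseDelayMs, then 2x, 4x, ...
        await new Promise((resolve) => setTimeout(resolve, baseDelayMs * 2 ** attempt));
      }
    } finally {
      clearTimeout(timer);
    }
  }
  throw lastError;
}
```

Note that the timeout only stops waiting for the attempt. It does not terminate a hung Chromium process, so a real service would still need to close or kill the browser separately.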

4. You want features beyond raw screenshots

Modern screenshot APIs support full-page capture, PDF export, video recording, dark mode emulation, geolocation spoofing, custom CSS/JS injection, ad blocking, and webhook delivery out of the box. Puppeteer exposes primitives for some of these (full-page capture, PDF export, media-feature emulation), but building all of them into a dependable service yourself can take weeks.
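
For a sense of where a do-it-yourself implementation starts, here is a sketch of three of these features using calls that Puppeteer provides (screenshot with fullPage, emulateMediaFeatures, pdf). The function name and the returned object shape are illustrative, and the page is assumed to have already navigated to its target. Video, ad blocking, and webhook delivery are not covered and would need additional work.

```javascript
// Sketch: a few capture variants built on Puppeteer primitives.
// `page` is a Puppeteer Page that has already loaded the target URL.
async function captureVariants(page) {
  // Full-page screenshot: the whole scrollable page, not just the viewport
  const fullPage = await page.screenshot({ type: 'png', fullPage: true });

  // Dark mode: emulate the prefers-color-scheme media feature
  await page.emulateMediaFeatures([{ name: 'prefers-color-scheme', value: 'dark' }]);
  const dark = await page.screenshot({ type: 'png' });

  // PDF export of the same page
  const pdf = await page.pdf({ format: 'A4', printBackground: true });

  return { fullPage, dark, pdf };
}
```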

5. You want built-in caching

Screenshot APIs typically cache results by URL and parameters. If you're generating screenshots of the same pages repeatedly (monitoring, thumbnails, OG images), caching can cut your response times from 3–8 seconds to under 100ms and reduce costs dramatically.
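
The idea of caching by URL and parameters can be sketched as follows. This is an illustration of the general technique, not how any particular provider implements it; the names cacheKey and ScreenshotCache are hypothetical, and the key derivation only handles flat parameter objects.

```javascript
const { createHash } = require('node:crypto');

// Derive a stable cache key from the URL and capture parameters.
// Keys are sorted so { a, b } and { b, a } hash identically.
function cacheKey(params) {
  const canonical = JSON.stringify(
    Object.keys(params).sort().map((key) => [key, params[key]])
  );
  return createHash('sha256').update(canonical).digest('hex');
}

// Minimal in-memory TTL cache. A real service would more likely use
// Redis or object storage; this only illustrates the lookup logic.
class ScreenshotCache {
  constructor(ttlMs, now = Date.now) {
    this.ttlMs = ttlMs;
    this.now = now; // injectable clock, handy for testing
    this.entries = new Map();
  }

  get(params) {
    const entry = this.entries.get(cacheKey(params));
    if (!entry || this.now() - entry.storedAt > this.ttlMs) return undefined;
    return entry.image;
  }

  set(params, image) {
    this.entries.set(cacheKey(params), { image, storedAt: this.now() });
  }
}
```

A cache hit skips the browser entirely, which is where the drop from seconds to milliseconds comes from.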

Side-by-Side Comparison

| Factor | Puppeteer (self-hosted) | Screenshot API |
|---|---|---|
| Setup time | Hours to days (infra, queuing, monitoring) | Minutes (API key + one HTTP call) |
| Language support | Node.js only | Any language |
| Scaling | Manual (cluster, queue, autoscale) | Automatic |
| Maintenance | High (Chromium updates, crash recovery) | None |
| Cost at low volume | Very low (server cost only) | Low (pay-per-capture plans) |
| Cost at high volume | Infrastructure + engineering time | Predictable per-screenshot pricing |
| Browser control | Full | Parametric (URL, viewport, timing, etc.) |
| Reliability | Depends on your implementation | Managed SLA |
| Features (PDF, video, caching) | Build yourself | Built-in |

The Hidden Cost of Puppeteer in Production

The code to take a screenshot with Puppeteer is a handful of lines. The production system around it is not:

- A queue to prevent memory spikes from concurrent captures

- Worker process management (pm2, Docker, Kubernetes)

- Graceful shutdown and zombie process cleanup

- Memory limit enforcement per browser context

- Chromium version pinning and update strategy

- Timeout handling for pages that never finish loading

- Alerting when the service falls behind the queue
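
The first item on that list, a queue that caps concurrent captures, might look roughly like this in its simplest in-process form. The name createLimiter is hypothetical, and a production queue would usually also need persistence, prioritization, and backpressure, which this sketch omits.

```javascript
// Sketch: cap the number of captures running at once so that
// concurrent Chromium instances cannot exhaust memory.
function createLimiter(maxConcurrent) {
  let active = 0;
  const waiting = [];

  const next = () => {
    if (active >= maxConcurrent || waiting.length === 0) return;
    active++;
    const { task, resolve, reject } = waiting.shift();
    // Promise.resolve().then(task) also catches synchronous throws
    Promise.resolve()
      .then(task)
      .then(resolve, reject)
      .finally(() => {
        active--;
        next(); // start the next queued task, if any
      });
  };

  // Returns a promise that settles with the task's result
  return (task) =>
    new Promise((resolve, reject) => {
      waiting.push({ task, resolve, reject });
      next();
    });
}
```

With const limit = createLimiter(4), wrapping each capture as limit(() => capture(url)) keeps at most four captures in flight while the rest wait their turn; capture here stands for whatever capture function you already have.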

This is real engineering work. For teams whose core product is not browser automation infrastructure, time spent here is time not spent on the actual product.

A screenshot API lets your team focus on your product. The browser infrastructure is someone else's problem.

A Practical Decision Framework

Ask yourself these questions:

- Do I need to interact with the page (login, click, fill a form) before screenshotting? → Puppeteer

- Am I building in Node.js and screenshots are one small piece? → Puppeteer or API (either works)

- Do I need more than ~100 screenshots/day reliably? → Screenshot API

- Is my backend Python, PHP, Go, Ruby, or anything non-Node? → Screenshot API

- Do I need PDF export, full-page capture, or video recording? → Screenshot API

- Do I want zero infrastructure to manage? → Screenshot API
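
For readers who prefer code to checklists, the questions above can be written as a small function. The field names are hypothetical, and letting page interaction take priority over the other answers is an assumption of this sketch rather than a rule.

```javascript
// Sketch: the checklist as a function. Field names are hypothetical,
// and the precedence of page interaction is an assumption.
function recommend(needs) {
  // Multi-step interaction does not fit a request/response API
  if (needs.pageInteraction) return 'puppeteer';

  const favorsApi =
    needs.screenshotsPerDay > 100 ||
    needs.nonNodeBackend ||
    needs.pdfFullPageOrVideo ||
    needs.zeroInfrastructure;
  if (favorsApi) return 'screenshot-api';

  // e.g. a Node.js project where screenshots are one small piece
  return 'either';
}

console.log(recommend({ nonNodeBackend: true, screenshotsPerDay: 5000 }));
// → screenshot-api
```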

Getting Started with a Screenshot API

If you've decided an API fits your use case, here's what a basic integration looks like in Node.js (version 18 or later, which provides a global fetch):

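    const fs = require('fs');

    // Uses the global fetch available in Node.js 18+
    async function captureScreenshot() {
      const response = await fetch('https://screenshotcore.com/api/v1/screenshot', {
        method: 'POST',
        headers: {
          'Authorization': 'Bearer YOUR_API_KEY',
          'Content-Type': 'application/json',
        },
        body: JSON.stringify({
          url: 'https://your-target-site.com',
          format: 'png',
          viewport_width: 1440,
          viewport_height: 900,
          wait_until: 'networkidle0',
          full_page: true,
          block_ads: true,
          cache_ttl: 3600,
        }),
      });

      // Surface failures instead of silently skipping the write
      if (!response.ok) {
        throw new Error(`Screenshot request failed with status ${response.status}`);
      }

      const buffer = await response.arrayBuffer();
      fs.writeFileSync('screenshot.png', Buffer.from(buffer));
    }

    captureScreenshot().catch(console.error);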

The cache_ttl parameter means repeated captures of the same URL and parameters within the TTL are served from cache instead of being re-rendered - critical for use cases like thumbnail generation or website monitoring at scale.

Conclusion

Puppeteer is a powerful tool for browser automation. If you need full programmatic browser control for complex multi-step workflows, it's the right choice. But for the most common use case - capturing screenshots of URLs at scale, in any language, with zero infrastructure overhead - a screenshot API wins on every dimension that matters in production: reliability, speed, maintainability, and developer time.

The short Puppeteer snippet is seductive. The production system around it is not. Know which one you're actually signing up for before you choose.